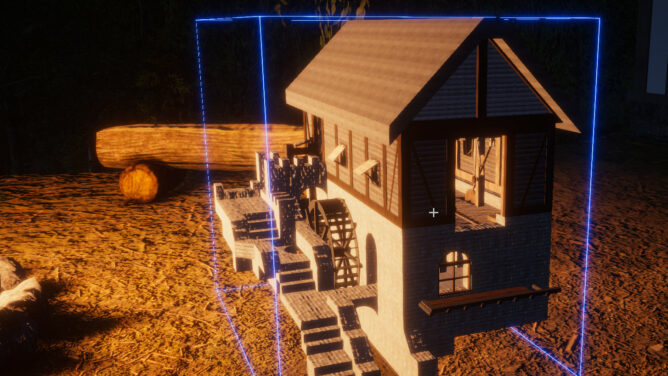

With #RisingWorld (Unity) improving a lot lately, we’re feature-wise almost on par with the old Java version again. Due to my hobbies I’m playing on the #medieval server https://medievalrealms.co.uk/ where I usually construct buildings based on specific periods, according to my understanding of timber-framed constructions. Which may not be the best to rely on but hey, it’s a game after all.

One of the features still missing is an in-game map. We do have the compass already though, and with debug enabled we even get an exact position on the current map. And the new maps are huge! Since we’re building here in multiplayer, it’s no wonder that this is a sorely missed feature: you want to get an idea of where the others are and what they are building, and it’s no fun navigating by X,Y,Z alone to visit other players (and keeping note of where your own spot is located).

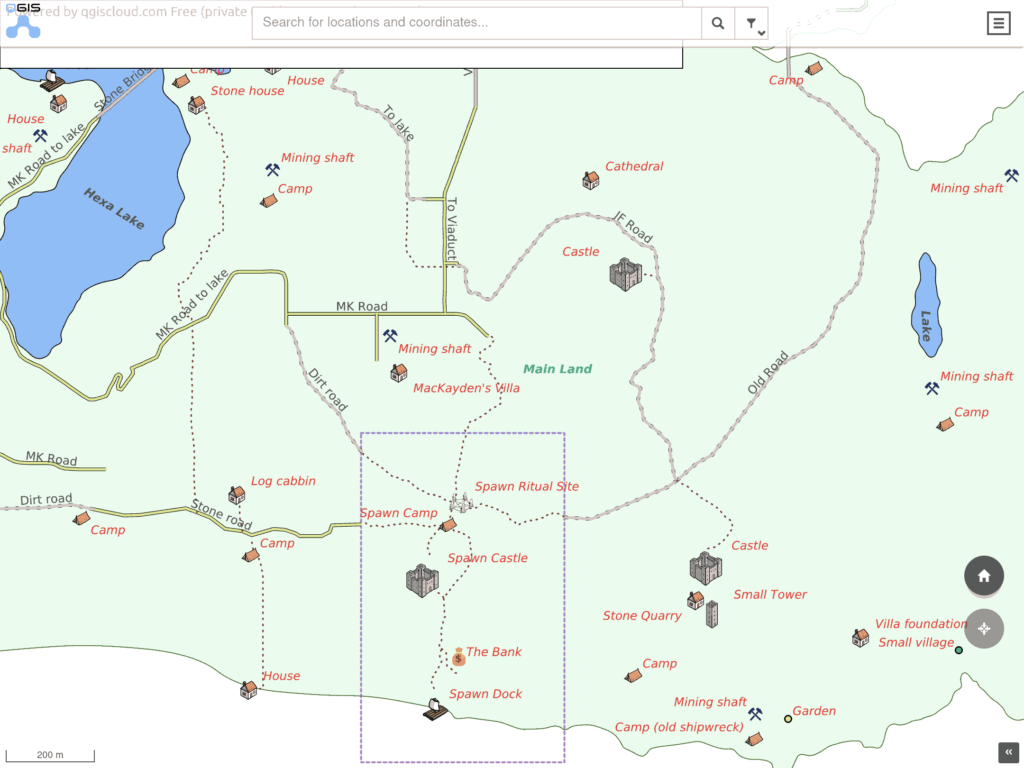

So I was intrigued to see that the player @Bamse did what gamers tend to do when a feature is missing: they start some sort of helper app (or wiki or whatever). This resulted in a #QGIS Cloud map project at https://qgiscloud.com/Bamse/MapMedievalRealms/ where players from the same server may add POIs and do the legwork of surveying the “new” world.

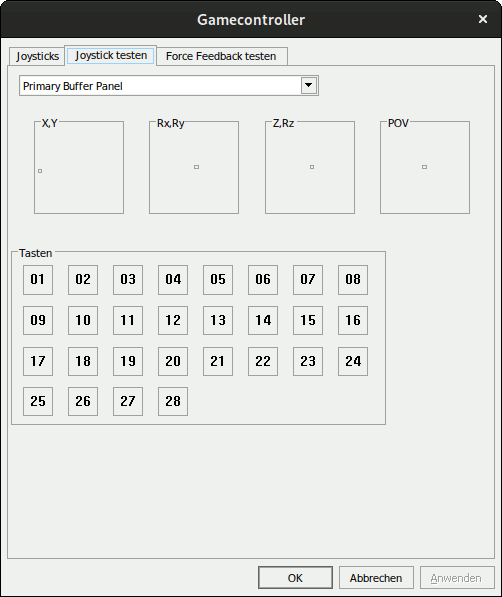

The only drawback (haha. sorry.) is: It’s a PITA to do the surveying because stopping every few meters to note down a bunch of coordinates takes hours! Someone had to do this though, because “my” isle – a piece of rock I randomly stumbled over after the latest server reset – was still missing! And while I’ve clocked roughly 700h in this game already, I was not going to do that. I’m a programmer – which equals lazy in my opinion. So I started scripting and after a few minutes came up with the following, still crude, solution:

```shell
# Start with a fresh log file
echo "" > move.log

while true; do
  # Screenshot the active window, crop the coordinate readout, OCR it,
  # pick out three decimal numbers and append them (rounded) as a CSV line
  gnome-screenshot -w -f /tmp/snapshot.png \
    && convert /tmp/snapshot.png -crop 165x30+905+975 /tmp/snapshot-cropped.tiff \
    && tesseract /tmp/snapshot-cropped.tiff - -l eng --psm 13 quiet \
    | awk 'match($0, /([[:digit:]]+[.][[:digit:]]+).([[:digit:]]+[.][[:digit:]]+).([[:digit:]]+[.][[:digit:]]+)/) { print substr($0, RSTART, RLENGTH) }' \
    | awk '{ printf "%0.0f,%0.0f,%0.0f\n", $1, $2, $3 }' >> move.log
  sleep 2
done
```

This surely can be improved a lot but… minimum viable product. We’re still talking about a game. Here is what it does:

* Take a screenshot of the active window (Rising World while playing)

* Save it to /tmp (that’s in my RAM disk)

* Crop out the coordinates and convert it to tiff using `imagemagick`

* Run `tesseract` for OCR detection

* Pipe the result to awk and use a RegEx to identify three numbers

* Reformat the 3 numbers (round away the decimals) and dump them into a CSV-like log

* Sleep for 2 seconds and repeat until terminated

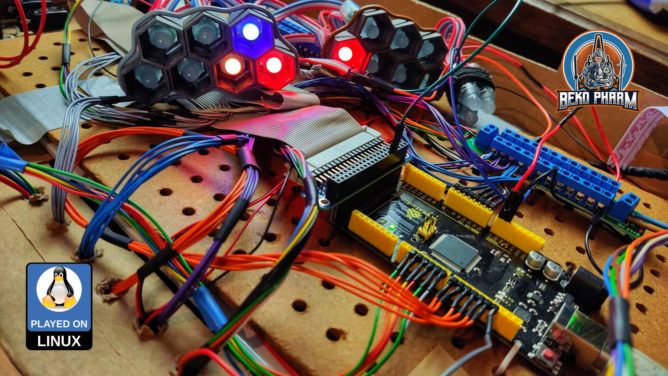

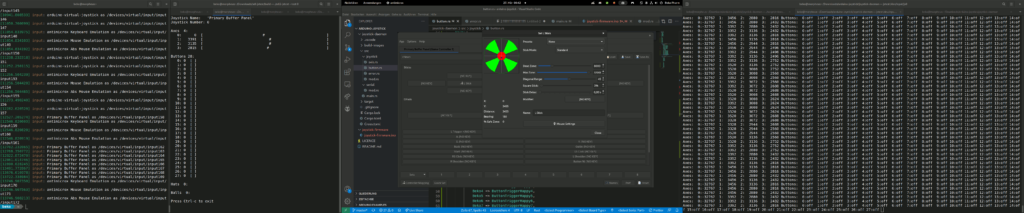

And in case you wonder why I used gnome-screenshot: I’m on #Wayland and the usual suspects written for X simply do not work. I did recompile gnome-screenshot though to disable the annoying flashing, so it’s silent now.

Why the awk program? Well, tesseract is good but the raw data looked something like this in the end and the RegEx cleans that up somewhat:

```
serene ep)
9295.2 95.4 2828.0 |
9295.2 95.4 2828.0 |
9296.4 95.4 2828.5 |
nn
9303.1 95.4 2838.5 |
9295.0 98.4 2857.65
9289.1 98.7 2868.1 (7
9296.5 96.7 2849.0 |»
9301.1 95.4 2835.5 |
9301.1 95.4 2835.5 |
```
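
To make the cleanup step a bit more tangible, here is a minimal sketch that feeds a few raw lines like the ones above through the same kind of awk filter. The function name `extract_coords` is made up for illustration, and the pattern is written with `[0-9]` instead of `[[:digit:]]`, which should behave the same for this kind of input.

```shell
# Hypothetical helper: keep only lines containing three decimal numbers
# and print them rounded to whole numbers, comma-separated.
extract_coords() {
  awk 'match($0, /([0-9]+[.][0-9]+).([0-9]+[.][0-9]+).([0-9]+[.][0-9]+)/) { print substr($0, RSTART, RLENGTH) }' \
    | awk '{ printf "%0.0f,%0.0f,%0.0f\n", $1, $2, $3 }'
}

# Two noise lines and two usable readings taken from the raw OCR output
printf '%s\n' 'serene ep)' '9295.2 95.4 2828.0 |' 'nn' '9289.1 98.7 2868.1 (7' | extract_coords
# prints:
# 9295,95,2828
# 9289,99,2868
```

Lines without three numbers are silently dropped, and trailing junk such as `|` or `(7` falls outside the matched part, so it never reaches the log.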

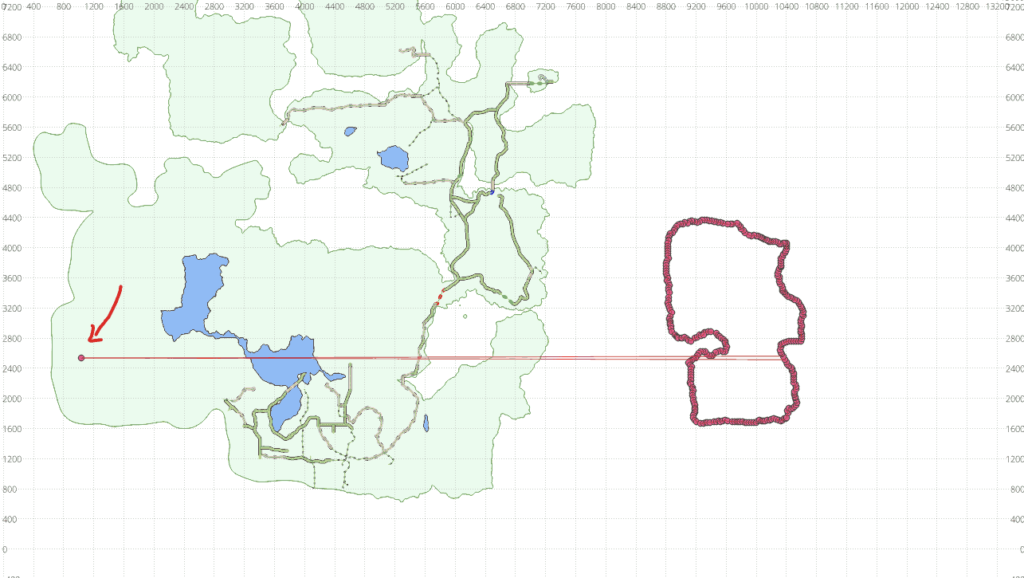

So I put this to a test and jogged around “my” isle and here are the results:

One(!) data point was misread during the ~15-minute run. Not too shabby! That could easily be fixed manually and who knows… mebbe I’ll improve on the script to check for implausible spikes like that at some point.
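
A check for such spikes could look roughly like the sketch below. It is not part of the script above, just one possible approach: compare each logged point with the last accepted one and skip it if any axis jumps further than seems plausible between two samples. The function name `filter_spikes` and the default threshold of 50 are assumptions and would need tuning to the actual movement speed.

```shell
# Hypothetical post-processing step for the CSV-like log:
# drop points that jump too far from the last accepted point.
filter_spikes() {
  # $1 = maximum plausible step per axis between two samples (assumed default: 50)
  awk -F, -v max="${1:-50}" '
    function abs(v) { return v < 0 ? -v : v }
    NF != 3 { next }   # skip blank or malformed lines
    !seen || (abs($1 - px) <= max && abs($2 - py) <= max && abs($3 - pz) <= max) {
      print            # plausible: keep it and remember it as the reference
      px = $1; py = $2; pz = $3; seen = 1
      next
    }
    { print "skipped implausible point: " $0 > "/dev/stderr" }
  '
}
```

It could be run after a survey with something like `filter_spikes 50 < move.log > move-clean.log`. One known weakness of this naive version: the first point is always trusted, so if that one happens to be the misread, every following point gets rejected.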

I demoed the script to other players on the same server and some already started looking into ways to adapt this script to Windows. Just don’t ask me how to do that – I really wouldn’t know 😛

Updated 10th Dec 2022: A solution to do the same on a Windows PC appeared on https://wiki.calarasi.net/en/public/medievalrealms/ocr-coordinates